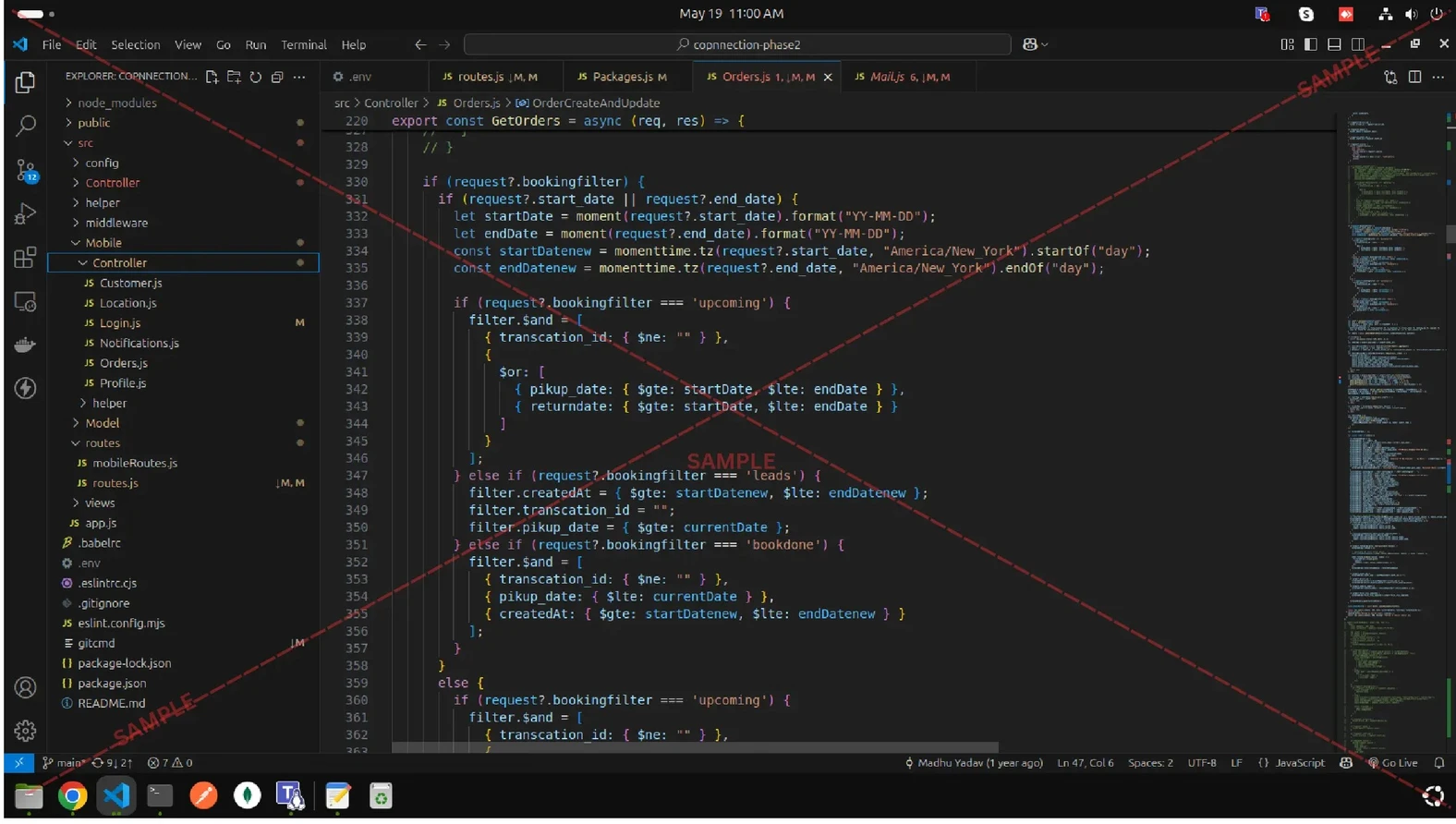

5 Different Ways to Synchronize Data from MongoDB to Elasticsearch

You may often need to copy data from MongoDB to Elasticsearch in bulk. A common architecture uses MongoDB as the primary data store and Elasticsearch for full-text search over that data. This tutorial shows how to use different tools and plugins to quickly copy or continuously synchronize data from MongoDB to Elasticsearch.

Mongo Connector

mongo-connector is a Python package that runs a real-time sync service. It is a generic connection system for integrating MongoDB with any other system that has simple CRUD semantics: it creates a pipeline from a MongoDB cluster to one or more target systems, such as Solr, Elasticsearch, or another MongoDB cluster. mongo-connector requires MongoDB to run in replica-set mode. It first copies your existing documents from MongoDB to the target system; afterwards, it tails the MongoDB oplog and applies each operation to the target, keeping MongoDB and the target in sync in real time. To write data to Elasticsearch, it needs an additional package named “elastic2_doc_manager”.
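The copy-then-tail behavior described above can be modeled in a few lines of Python. This is a simplified sketch, not mongo-connector's code: the target is a hypothetical in-memory dict standing in for an Elasticsearch index, and the oplog entries are hand-written dicts using the oplog's `op` (`i`/`u`/`d`), `ns`, `o` and `o2` fields. Real update entries can take other forms than the `$set` and whole-document replacement handled here.

```python
from typing import Any, Dict, List

# Hypothetical in-memory target: namespace ("db.collection") -> {_id: document}.
# It stands in for an Elasticsearch index in this sketch.
Target = Dict[str, Dict[Any, Dict[str, Any]]]


def initial_copy(source: Dict[str, List[Dict[str, Any]]], target: Target) -> None:
    """Phase 1: copy every existing document of each collection to the target."""
    for ns, docs in source.items():
        bucket = target.setdefault(ns, {})
        for doc in docs:
            bucket[doc["_id"]] = dict(doc)


def apply_oplog_entry(entry: Dict[str, Any], target: Target) -> None:
    """Phase 2: replay one (simplified) oplog entry against the target."""
    bucket = target.setdefault(entry["ns"], {})
    op = entry["op"]
    if op == "i":  # insert: 'o' is the full new document
        bucket[entry["o"]["_id"]] = dict(entry["o"])
    elif op == "u":  # update: 'o2' identifies the document, 'o' describes the change
        doc_id = entry["o2"]["_id"]
        change = entry["o"]
        if "$set" in change:  # modifier-style update (only $set handled here)
            bucket.setdefault(doc_id, {"_id": doc_id}).update(change["$set"])
        else:  # replacement-style update: 'o' is the whole new document
            bucket[doc_id] = {**change, "_id": doc_id}
    elif op == "d":  # delete: 'o' holds the _id of the removed document
        bucket.pop(entry["o"]["_id"], None)
    # Other op types (e.g. 'n' no-op, 'c' command) are ignored in this sketch.


if __name__ == "__main__":
    target: Target = {}
    initial_copy({"shop.products": [{"_id": 1, "name": "pen"}]}, target)
    for entry in [
        {"op": "i", "ns": "shop.products", "o": {"_id": 2, "name": "ink"}},
        {"op": "u", "ns": "shop.products", "o2": {"_id": 1}, "o": {"$set": {"price": 3}}},
        {"op": "d", "ns": "shop.products", "o": {"_id": 2}},
    ]:
        apply_oplog_entry(entry, target)
    print(target["shop.products"])  # {1: {'_id': 1, 'name': 'pen', 'price': 3}}
```

Replaying entries strictly in oplog order is what keeps the target consistent: the delete of `_id` 2 only makes sense after its insert has been applied.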

Elasticsearch-river-mongodb

Elasticsearch can be extended with plugins, which are easy to use and develop. Plugins can be used for analysis, discovery, monitoring, data synchronization, and more. Rivers are a group of plugins that synchronize data between a database and Elasticsearch, and the MongoDB river plugin is named “elasticsearch-river-mongodb”.

When a document is inserted into MongoDB, the database and collection are created automatically if they do not already exist, and the document is stored; because MongoDB does not enforce a fixed schema, later documents can add new fields. If MongoDB is configured as a replica set, each insert is also recorded in the oplog (operations log). The oplog is a capped collection that keeps a rolling record of all operations that modify the data stored in the databases, and it is this record that the river plugin reads to pick up changes.
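To make the "rolling record" idea concrete, here is a toy model of a capped log in Python. It is a deliberate simplification: MongoDB caps the real oplog by size and orders entries by a `ts` timestamp, whereas this sketch caps by entry count and uses a plain counter. The `can_resume` check illustrates why a consumer that tails the oplog must keep up: once the entries it still needs have been overwritten, it can no longer continue from the log and would need a fresh full copy.

```python
from collections import deque
from typing import Any, Deque, Dict, List


class CappedLog:
    """Toy capped log: keeps at most max_entries, silently dropping the oldest."""

    def __init__(self, max_entries: int) -> None:
        self._entries: Deque[Dict[str, Any]] = deque(maxlen=max_entries)
        self._next_ts = 1  # increasing counter standing in for the oplog's 'ts'

    def record(self, op: str, ns: str, o: Dict[str, Any]) -> None:
        """Append one data-modifying operation; evicts the oldest when full."""
        self._entries.append({"ts": self._next_ts, "op": op, "ns": ns, "o": o})
        self._next_ts += 1

    def since(self, last_ts: int) -> List[Dict[str, Any]]:
        """Entries newer than last_ts, i.e. what a tailing reader has not seen yet."""
        return [e for e in self._entries if e["ts"] > last_ts]

    def can_resume(self, last_ts: int) -> bool:
        """False if some entry after last_ts has already been evicted."""
        return not self._entries or self._entries[0]["ts"] <= last_ts + 1


log = CappedLog(max_entries=3)
for i in range(1, 6):
    log.record("i", "shop.products", {"_id": i})
print([e["ts"] for e in log.since(3)])  # [4, 5]
print(log.can_resume(3), log.can_resume(1))  # True False
```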

Logstash

Logstash is an open-source data collection engine with real-time pipelining capabilities. It can dynamically unify data from disparate sources and normalize it into destinations of your choice. To synchronize MongoDB with Elasticsearch, we can take advantage of Logstash's input, filter, output, and buffering capabilities by combining a MongoDB input plugin with the Elasticsearch output plugin. The JDBC input plugin is another option, but it requires a JDBC driver for MongoDB.
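Logstash pipelines are written in Logstash's own configuration format, which is not reproduced here. Instead, the following Python sketch models the three stages the paragraph describes: events are buffered after input, passed through filters in order, and sent to the output in batches. The `move_mongo_id` filter is hypothetical; it reflects a practical concern, since recent Elasticsearch versions reject an `_id` field inside the document body, so a MongoDB `_id` typically has to be renamed or moved into the index metadata.

```python
from collections import deque
from typing import Any, Callable, Deque, Dict, Iterable, List

Event = Dict[str, Any]


def run_pipeline(
    inputs: Iterable[Event],
    filters: List[Callable[[Event], Event]],
    output: Callable[[List[Event]], None],
    batch_size: int = 2,
) -> None:
    """Toy input -> filter -> output pipeline with a buffer between the stages."""
    buffer: Deque[Event] = deque(inputs)  # input stage: events are buffered first
    batch: List[Event] = []
    while buffer:
        event = buffer.popleft()
        for apply_filter in filters:  # filter stage: each filter transforms the event
            event = apply_filter(event)
        batch.append(event)
        if len(batch) == batch_size:  # output stage: ship events in batches
            output(batch)
            batch = []
    if batch:  # flush the final, partially filled batch
        output(batch)


def move_mongo_id(event: Event) -> Event:
    """Hypothetical filter: keep MongoDB's _id as a plain field named mongo_id."""
    event = dict(event)
    event["mongo_id"] = str(event.pop("_id"))
    return event


sent: List[List[Event]] = []
run_pipeline(
    [{"_id": 1, "name": "pen"}, {"_id": 2, "name": "ink"}, {"_id": 3, "name": "pad"}],
    [move_mongo_id],
    sent.append,
)
print(sent)
# [[{'name': 'pen', 'mongo_id': '1'}, {'name': 'ink', 'mongo_id': '2'}], [{'name': 'pad', 'mongo_id': '3'}]]
```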

Transporter

Transporter is a good choice when you want to export MongoDB data to an Elasticsearch server. It can also move data from or to other types of data stores.
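Whatever tool performs the export, loading many documents into Elasticsearch efficiently typically goes through its `_bulk` API, whose request body is newline-delimited JSON: an action line such as `{"index": {...}}` followed by the document source, with a newline after the last line. The Python sketch below only builds those bodies from MongoDB-style documents; it is not Transporter's implementation, and the index name `products` and the batch size are illustrative assumptions. Each body would then be sent as `POST /_bulk` with the `Content-Type: application/x-ndjson` header.

```python
import json
from typing import Any, Dict, Iterable, Iterator, List


def bulk_bodies(
    docs: Iterable[Dict[str, Any]], index: str, batch_size: int = 500
) -> Iterator[str]:
    """Yield _bulk request bodies (NDJSON), each holding up to batch_size documents."""
    lines: List[str] = []
    for doc in docs:
        source = dict(doc)
        doc_id = str(source.pop("_id"))  # _id belongs in the action line, not the body
        lines.append(json.dumps({"index": {"_index": index, "_id": doc_id}}))
        lines.append(json.dumps(source))
        if len(lines) == 2 * batch_size:
            yield "\n".join(lines) + "\n"  # a bulk body must end with a newline
            lines = []
    if lines:
        yield "\n".join(lines) + "\n"


docs = [{"_id": 1, "name": "pen"}, {"_id": 2, "name": "ink"}, {"_id": 3, "name": "pad"}]
for body in bulk_bodies(docs, index="products", batch_size=2):
    print(body, end="")
```

Batching keeps each request to a bounded size, and reusing the MongoDB `_id` as the Elasticsearch `_id` makes a repeated export overwrite documents instead of duplicating them.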

Mongoosastic

If you use Node.js as your web server, you can use the Mongoosastic module, a plugin for the Mongoose ODM, to keep data stored on both sides. When a document is saved through Mongoose, it is written to MongoDB and Mongoosastic also indexes it in Elasticsearch.
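The store-in-both-places idea can be sketched independently of Node.js. The Python below is a hypothetical model, not Mongoosastic's API: the `DualWriter` class and the two callables are placeholders for a MongoDB write and an Elasticsearch index call, simulated here with dictionaries.

```python
from typing import Any, Callable, Dict, List

Doc = Dict[str, Any]


class DualWriter:
    """Hypothetical helper that stores each document in MongoDB and in the search index."""

    def __init__(
        self,
        save_primary: Callable[[Doc], None],
        index_search: Callable[[Doc], None],
    ) -> None:
        self._save_primary = save_primary
        self._index_search = index_search
        self.pending_reindex: List[Any] = []  # _ids whose indexing failed

    def save(self, doc: Doc) -> None:
        self._save_primary(doc)  # 1. MongoDB stays the source of truth
        try:
            self._index_search(doc)  # 2. then mirror the document into the index
        except Exception:  # broad on purpose in this sketch: any indexing error
            self.pending_reindex.append(doc["_id"])  # remember it for a later retry


mongo: Dict[Any, Doc] = {}
search: Dict[Any, Doc] = {}


def save_to_mongo(doc: Doc) -> None:
    mongo[doc["_id"]] = doc


def index_to_search(doc: Doc) -> None:
    if doc.get("name") == "broken":  # simulate the search cluster rejecting a write
        raise ConnectionError("search cluster unavailable")
    search[doc["_id"]] = doc


writer = DualWriter(save_to_mongo, index_to_search)
writer.save({"_id": 1, "name": "pen"})
writer.save({"_id": 2, "name": "broken"})
print(sorted(mongo), sorted(search), writer.pending_reindex)  # [1, 2] [1] [2]
```

Writing to MongoDB first keeps it the source of truth. The trade-off of dual writes is that the two stores can diverge when the second write fails, which is why the sketch records the failed `_id` for a later re-index rather than silently dropping it.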

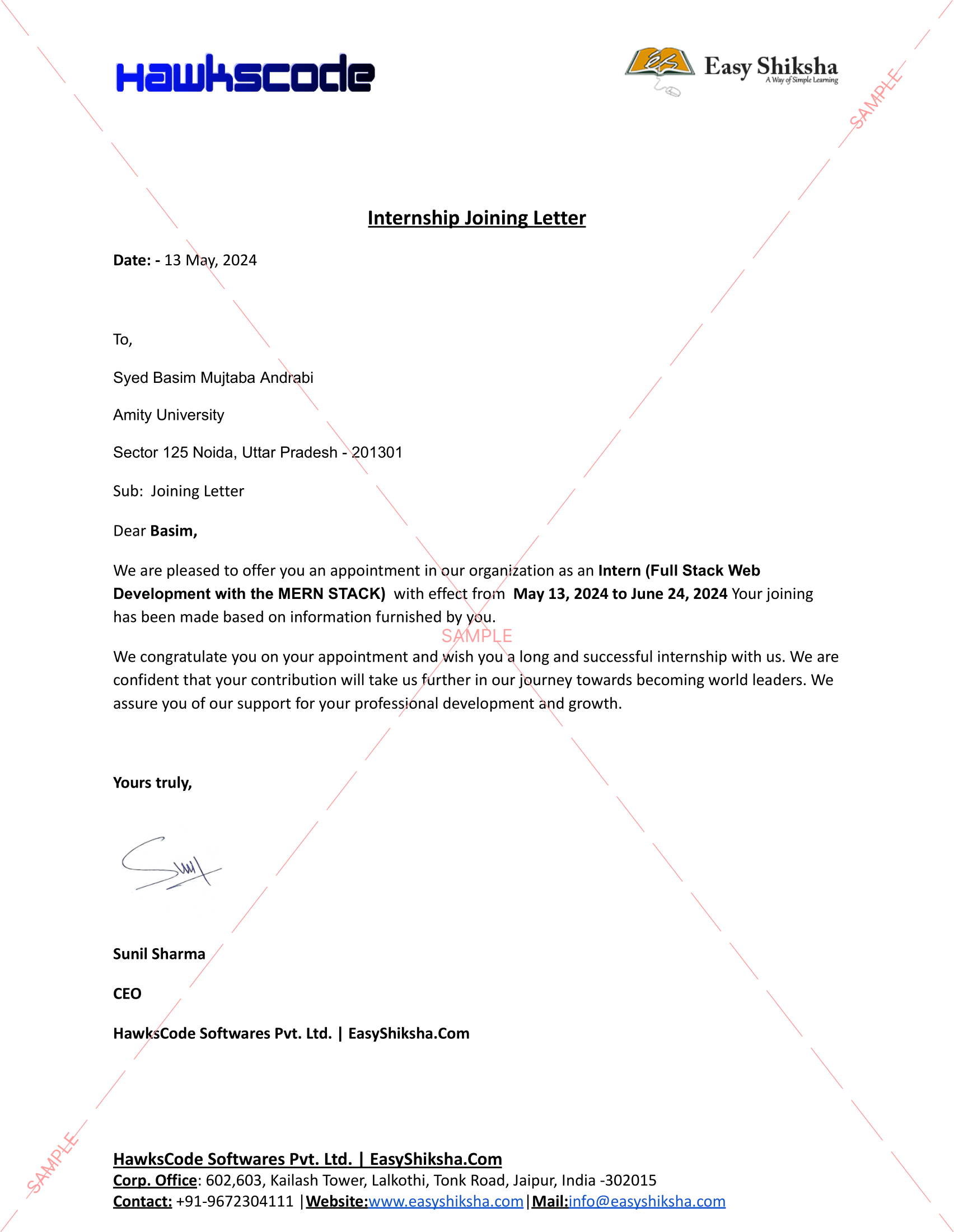

These are five different ways to synchronize data from MongoDB to Elasticsearch. To learn more, visit HawksCode and EasyShiksha.
